MCP vs Custom Tool Integration: When Standards Beat Custom Code

MCP has 97M+ monthly SDK downloads, but enterprise agents often need custom middleware. Here's how to decide.

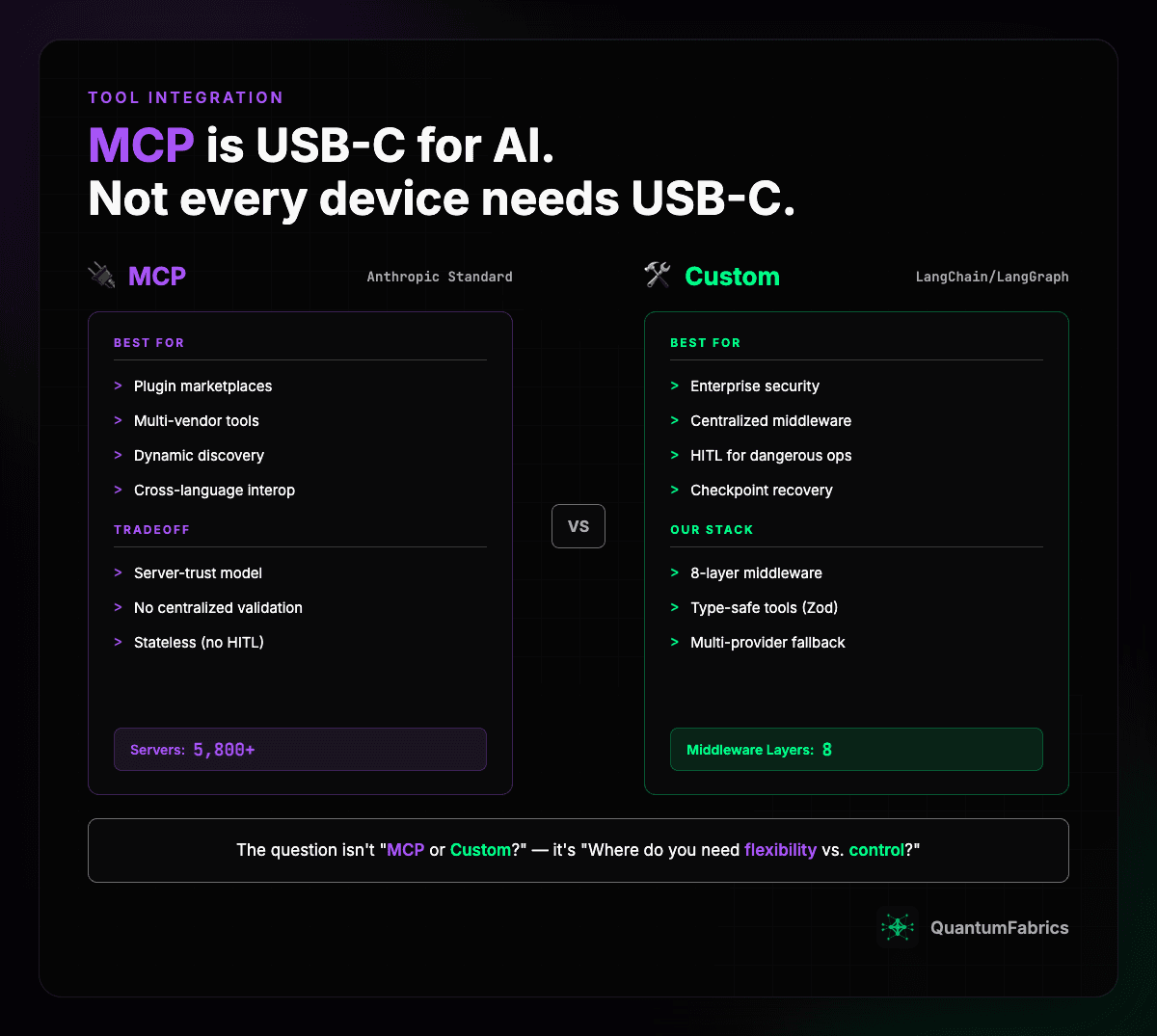

MCP is USB-C for AI tools. But not every device needs USB-C.

The MCP Explosion

The Model Context Protocol has taken over AI tool integration. The numbers speak for themselves:

- 97M+ monthly SDK downloads

- 5,800+ MCP servers available

- 300+ MCP clients in production

- Backed by Anthropic, OpenAI, Google, Microsoft

- Major deployments at Block, Bloomberg, Amazon

IDEs like Cursor and Windsurf have one-click MCP setup. It's becoming the default. So why would anyone build custom tool integration?

The Core Difference: Build-Time vs Runtime Discovery

Before diving into tradeoffs, understand the fundamental architectural split.

Custom tools (what we use): Tools are defined in code and loaded into the agent's system prompt at startup. The agent knows its full toolset before it runs. It's a closed, known set — every tool is type-checked, middleware-wrapped, and tested before deployment.
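
To illustrate what a closed, type-checked toolset buys you, here is a minimal sketch in plain TypeScript. The tool names mirror the ones used later in this post, but the `ToolInvocation` type and the `dispatch` function are hypothetical stand-ins, not our production code and not a LangChain API.

```typescript
// The full, closed set of tool calls the agent can make, fixed at build time.
type ToolInvocation =
  | { tool: "web_search"; args: { query: string } }
  | { tool: "send_email"; args: { to: string; subject: string } }
  | { tool: "delete_file"; args: { path: string } };

// Adding a new variant above without handling it here is a compile error,
// because the `never` assignment in the default branch stops type-checking.
function dispatch(call: ToolInvocation): string {
  switch (call.tool) {
    case "web_search":
      return `searching for ${call.args.query}`;
    case "send_email":
      return `emailing ${call.args.to}: ${call.args.subject}`;
    case "delete_file":
      return `deleting ${call.args.path}`;
    default: {
      const unreachable: never = call;
      return unreachable;
    }
  }
}

console.log(dispatch({ tool: "web_search", args: { query: "MCP spec" } }));
```

The compiler rejects a call to an unknown tool or a call with the wrong argument shape, which is the guarantee a runtime-discovered tool cannot give you.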

MCP tools: Discovery happens at runtime via a JSON-RPC protocol. The agent connects to MCP servers it doesn't know in advance, sends a tools/list request, and gets back self-describing tools with names, descriptions, and input schemas. Servers can even push notifications/tools/list_changed to signal new tools appearing mid-session.
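
To make the discovery step concrete, here is a rough sketch of the JSON-RPC 2.0 messages involved. The `tools/list` method and the `name`, `description`, and `inputSchema` fields follow the MCP specification; the `search_tickets` tool and the helper functions are hypothetical, and no transport is shown.

```typescript
// Shape of a tool as described in an MCP tools/list result.
interface McpTool {
  name: string;
  description?: string;
  inputSchema: {
    type: "object";
    properties?: Record<string, unknown>;
    required?: string[];
  };
}

interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

interface ToolsListResponse {
  jsonrpc: "2.0";
  id: number;
  result: { tools: McpTool[]; nextCursor?: string };
}

// Build the discovery request the client sends once the session is initialized.
function buildToolsListRequest(id: number): JsonRpcRequest {
  return { jsonrpc: "2.0", id, method: "tools/list" };
}

// Hypothetical response from a server the agent has never seen before.
const sampleResponse: ToolsListResponse = {
  jsonrpc: "2.0",
  id: 1,
  result: {
    tools: [
      {
        name: "search_tickets",
        description: "Search support tickets by keyword",
        inputSchema: {
          type: "object",
          properties: { query: { type: "string" } },
          required: ["query"],
        },
      },
    ],
  },
};

// Summarize what the agent learned at runtime: tool name plus required arguments.
function describeTools(response: ToolsListResponse): string[] {
  return response.result.tools.map(
    (tool) => `${tool.name}(${(tool.inputSchema.required ?? []).join(", ")})`
  );
}

const listRequest = buildToolsListRequest(1);
const discoveredTools = describeTools(sampleResponse);
console.log(JSON.stringify(listRequest), discoveredTools);
```

Everything the agent knows about `search_tickets` comes from that response, which is why the quality of the server's schema and description matters so much.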

The practical impact: with MCP, a new server can be added without redeploying your agent — just point it at a new URL. With custom tools, every change requires a code update. That's the flexibility MCP buys you. But it also means your agent is calling tools it has never seen before, with validation it doesn't control.

When MCP Falls Short

Here's what we discovered building enterprise AI agents at QuantumFabrics: MCP's flexibility becomes a liability when you need consistency.

The Security Problem

Research published by Knostic in July 2025 found ~2,000 MCP servers exposed without authentication. On top of that, each MCP server implements its own validation. That means:

- No guarantee of consistent security checks

- Each server trusts its own input validation

- No centralized policy enforcement

For enterprise deployments handling sensitive data, this is a problem.
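
One way to picture centralized policy enforcement is a single gate that every tool call passes through before it leaves the agent, regardless of which server it targets. The sketch below is illustrative only: the `ToolCall` shape, the rule set, and the server names are assumptions, not part of MCP or any library.

```typescript
// A tool call as the agent would issue it, tagged with the server it targets.
interface ToolCall {
  server: string;
  tool: string;
  args: Record<string, unknown>;
}

type PolicyDecision = { allowed: true } | { allowed: false; reason: string };

// A rule returns a reason string when it objects to a call, or null when it passes.
type PolicyRule = (call: ToolCall) => string | null;

// Hypothetical allowlist of servers the security team has reviewed.
const approvedServers = new Set(["internal-search", "ticketing"]);

const policyRules: PolicyRule[] = [
  (call) =>
    approvedServers.has(call.server) ? null : `server "${call.server}" is not approved`,
  (call) => {
    const path = call.args["path"];
    const isProtected =
      typeof path === "string" && (path === "/etc" || path.startsWith("/etc/"));
    return isProtected ? "path is protected" : null;
  },
];

// Run every rule in one place, before the call leaves the agent.
function enforcePolicy(call: ToolCall): PolicyDecision {
  for (const rule of policyRules) {
    const reason = rule(call);
    if (reason !== null) return { allowed: false, reason };
  }
  return { allowed: true };
}

console.log(enforcePolicy({ server: "ticketing", tool: "search", args: { query: "refund" } }));
console.log(enforcePolicy({ server: "rogue", tool: "search", args: {} }));
```

The point of the design is that the rules live with the agent, so they apply identically to every server instead of depending on what each server chooses to check.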

The HITL Problem

Some operations need human approval: deleting files, sending external emails, modifying production data. An MCP tool call is a single request/response exchange, and the tool-call flow has no built-in step for "pause, get approval, resume."
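
The "pause, get approval, resume" flow can be sketched as a small two-step state machine. This is a framework-free illustration under assumed names (`requestToolCall`, `resume`, `runTool`); it is not how LangGraph implements interrupts, only the shape of the behavior.

```typescript
type Decision = "approve" | "reject";

interface PendingApproval {
  status: "awaiting_approval";
  tool: string;
  args: Record<string, unknown>;
}

interface Completed {
  status: "completed" | "rejected";
  output: string;
}

// Hypothetical set of tools that must never run without a human decision.
const requiresApproval = new Set(["delete_file"]);

// Stand-in for real tool execution.
function runTool(tool: string, args: Record<string, unknown>): string {
  return `${tool} executed with ${JSON.stringify(args)}`;
}

// Step 1: the agent asks to call a tool. Dangerous tools pause instead of running.
function requestToolCall(
  tool: string,
  args: Record<string, unknown>
): PendingApproval | Completed {
  if (requiresApproval.has(tool)) {
    return { status: "awaiting_approval", tool, args };
  }
  return { status: "completed", output: runTool(tool, args) };
}

// Step 2: a human decision arrives later and the paused call is resumed or dropped.
function resume(pending: PendingApproval, decision: Decision): Completed {
  if (decision === "reject") {
    return { status: "rejected", output: `${pending.tool} was rejected by the reviewer` };
  }
  return { status: "completed", output: runTool(pending.tool, pending.args) };
}

const paused = requestToolCall("delete_file", { path: "/tmp/report.csv" });
if (paused.status === "awaiting_approval") {
  console.log(resume(paused, "approve").output);
}
```

Because the pending approval is plain data, it can be stored and picked up later, which is what makes the pause survive a long wait for a reviewer.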

The Recovery Problem

When an agent crashes mid-operation, can you resume from where it left off? MCP is request/response. There's no checkpoint mechanism.
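
As a rough illustration of what a checkpoint mechanism adds, the sketch below saves progress after each step so a second run skips work that already finished. The in-memory `Map` stands in for a durable store, and every name here is hypothetical.

```typescript
interface Checkpoint {
  nextStep: number;
  results: string[];
}

// In-memory stand-in for a durable store such as a database table.
const checkpoints = new Map<string, Checkpoint>();

type Step = () => string;

// Run steps in order, saving progress after each one so a later call can resume.
function runWithCheckpoints(runId: string, steps: Step[]): string[] {
  const saved = checkpoints.get(runId) ?? { nextStep: 0, results: [] };
  const results = [...saved.results];
  let nextStep = saved.nextStep;
  for (const step of steps.slice(nextStep)) {
    results.push(step()); // a throw here leaves the last saved checkpoint intact
    nextStep += 1;
    checkpoints.set(runId, { nextStep, results: [...results] });
  }
  return results;
}

// Simulate a crash in the second step on the first run only.
let crashedOnce = false;
const executed: string[] = [];
const workflow: Step[] = [
  () => {
    executed.push("fetch");
    return "fetched";
  },
  () => {
    if (!crashedOnce) {
      crashedOnce = true;
      throw new Error("simulated crash");
    }
    executed.push("send");
    return "sent";
  },
];

try {
  runWithCheckpoints("run-1", workflow);
} catch {
  // The first attempt dies mid-operation.
}
const finalResults = runWithCheckpoints("run-1", workflow);
console.log(finalResults, executed);
```

On the second run the fetch step is not repeated, because the checkpoint recorded that it had already completed.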

The Custom Alternative

Here's how we handle tool integration with LangChain + LangGraph:

```typescript
this.agent = createDeepAgent({
  model,
  systemPrompt,
  tools: [
    webSearchTool,
    sendEmailTool,
    deleteFileTool, // Has HITL interrupt
  ],
  // Pause before delete_file runs and wait for a human decision
  interruptOn: {
    delete_file: {
      allowedDecisions: ["approve", "reject"],
    },
  },
  // Middleware layers wrap the agent's model and tool calls
  middleware: [
    modelFallbackMiddleware(fallbackModel1, fallbackModel2),
    modelRetryMiddleware({ maxRetries: 2 }),
    toolRetryMiddleware({ maxRetries: 3 }),
    createErrorRecoveryMiddleware(emailParticipantContext),
  ],
});
```

8-Layer Middleware Stack

Every tool call passes through the full stack (the snippet above shows four of the eight layers):

- Model fallback - Switch providers if primary fails

- Model retry - Retry with exponential backoff

- Tool retry - Retry failed tool calls

- Rate limiting - Prevent runaway loops

- Empty message detection - Handle silent failures

- Path protection - Prevent access to protected directories

- File injection - Insert uploaded documents into context

- Error recovery - Convert exceptions to agent-recoverable messages

MCP alone does not give you this: each server enforces its own checks, so a shared stack like this has to live in the agent.
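
The layering idea itself can be sketched without any framework. The example below composes three simplified layers (retry, path protection, error recovery) around a tool handler. It is synchronous and skips backoff for brevity, and the `Middleware` type and helper names are assumptions for illustration, not the LangChain middleware API.

```typescript
interface ToolRequest {
  tool: string;
  args: Record<string, unknown>;
}

type Handler = (request: ToolRequest) => string;
type Middleware = (next: Handler) => Handler;

// Retry the wrapped handler up to maxRetries extra times (no backoff, for brevity).
function retry(maxRetries: number): Middleware {
  return (next) => (request) => {
    let lastError: unknown;
    for (let attempt = 0; attempt <= maxRetries; attempt++) {
      try {
        return next(request);
      } catch (error) {
        lastError = error;
      }
    }
    throw lastError;
  };
}

// Reject calls that touch a protected directory before they reach the tool.
function pathProtection(protectedPrefix: string): Middleware {
  return (next) => (request) => {
    const path = request.args["path"];
    if (typeof path === "string" && path.startsWith(protectedPrefix)) {
      throw new Error(`access to ${path} is blocked`);
    }
    return next(request);
  };
}

// Convert any exception into a message the agent can read and react to.
const errorRecovery: Middleware = (next) => (request) => {
  try {
    return next(request);
  } catch (error) {
    const message = error instanceof Error ? error.message : String(error);
    return `Tool ${request.tool} failed: ${message}`;
  }
};

// The first middleware in the list becomes the outermost layer.
function compose(layers: Middleware[], handler: Handler): Handler {
  return layers.reduceRight<Handler>((next, layer) => layer(next), handler);
}

// Hypothetical tool that fails twice before succeeding.
let attempts = 0;
const flakyTool: Handler = (request) => {
  attempts += 1;
  if (attempts < 3) throw new Error("temporary failure");
  return `${request.tool} ok`;
};

const guarded = compose([errorRecovery, pathProtection("/etc/"), retry(2)], flakyTool);
const okResult = guarded({ tool: "read_file", args: { path: "/tmp/a.txt" } });
const blockedResult = guarded({ tool: "read_file", args: { path: "/etc/passwd" } });
console.log(okResult, "|", blockedResult);
```

Ordering matters: with error recovery outermost, a blocked path or an exhausted retry both surface to the agent as a readable message instead of an unhandled exception.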

Cross-Platform Options

AWS: Bedrock AgentCore Gateway

Amazon's answer is a middleware layer between agents and tools:

- Converts agent requests (MCP) into API/Lambda calls

- Provides centralized auth management

- Supports MCP servers as native targets

Think of it as managed middleware. Good for AWS-native deployments.

GCP: Vertex AI Extensions

Define tools as OpenAPI specs, deploy as Cloud Functions:

- IAM-based authentication

- Automatic schema discovery

- Better Google Workspace integration

But no built-in HITL mechanism.

Open Source: LangChain + LangGraph

What we use. Full control over:

- tool() wrapper with Zod validation

- createMiddleware() for pre/post hooks

- PostgreSQL checkpointer for state recovery

- LangGraph interrupt for HITL

Hybrid: LangChain-MCP Adapters

Best of both worlds with langchain-mcp-adapters:

- Use MCP for external tools (dynamic discovery)

- Use LangChain for internal tools (type safety, middleware)

- Bridge between ecosystems
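
A hybrid setup boils down to one registry that holds both kinds of tools and remembers where each came from. The sketch below is a simplified, framework-free illustration. It does not use the langchain-mcp-adapters API, and the `RegisteredTool` shape and sample tools are assumptions.

```typescript
type ToolOrigin = "internal" | "mcp";

interface RegisteredTool {
  name: string;
  origin: ToolOrigin;
  invoke: (args: Record<string, unknown>) => string;
}

// Internal tools: defined in code, known at build time.
const internalTools: RegisteredTool[] = [
  {
    name: "delete_file",
    origin: "internal",
    invoke: (args) => `deleted ${String(args["path"])}`,
  },
];

// Hypothetical result of runtime discovery against an MCP server.
const externalTools: RegisteredTool[] = [
  {
    name: "web_search",
    origin: "mcp",
    invoke: (args) => `results for ${String(args["query"])}`,
  },
  { name: "delete_file", origin: "mcp", invoke: () => "remote delete" },
];

// Merge both sets. Internal tools are written last, so they win name collisions
// and a remote server cannot shadow a trusted tool.
function buildRegistry(
  internal: RegisteredTool[],
  external: RegisteredTool[]
): Map<string, RegisteredTool> {
  const registry = new Map<string, RegisteredTool>();
  for (const tool of external) registry.set(tool.name, tool);
  for (const tool of internal) registry.set(tool.name, tool);
  return registry;
}

const registry = buildRegistry(internalTools, externalTools);
console.log(registry.get("delete_file")?.origin, registry.get("web_search")?.origin);
```

Keeping the origin on each entry lets the agent apply stricter handling, such as extra validation or approval, to anything that arrived through runtime discovery.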

Decision Framework

Use MCP when:

- Building plugin ecosystems

- Integrating multi-vendor tools

- Need cross-language interoperability

- Dynamic tool discovery is important

Use custom when:

- Enterprise security requirements

- Need centralized middleware

- HITL for dangerous operations

- Checkpoint-based recovery

- Type safety at compile time

Key Takeaways

- MCP's flexibility trades off against consistency

- Enterprise agents often need middleware that MCP doesn't provide

- The question isn't "MCP or custom?" - it's "where do you need flexibility vs. control?"

- Hybrid approaches (LangChain-MCP adapters) can give you both

Sources

- MCP Enterprise Adoption Guide - 97M+ downloads, enterprise adoption patterns

- AWS AgentCore Gateway - AWS middleware approach

- LangChain MCP Security - Security comparison

- Anthropic MCP - Original announcement